Multitask Modeling using OpenDelta

Multitask Serving with Delta-tuning

A major advantage of Delta-tuning is that it can be used for multitask serving. Imagine we have a pretrained model trained on a mix of data from multiple languages, e.g., English, Chinese, and French. Now we want separate models that specialize in Chinese, French, and English. We can delta-tune three deltas, one per language, each with a small amount of additional language-specific data. During serving, when a Chinese sentence arrives, we attach the “Chinese Delta”; when a French sentence arrives next, we detach the “Chinese Delta” and attach the “French Delta”.

Here is how to achieve multitask serving using OpenDelta.

from transformers import AutoModelForSequenceClassification

model = AutoModelForSequenceClassification.from_pretrained("facebook/bart-base")

from opendelta import LoraModel

delta_model = LoraModel(backbone_model=model, modified_modules=['fc2'])

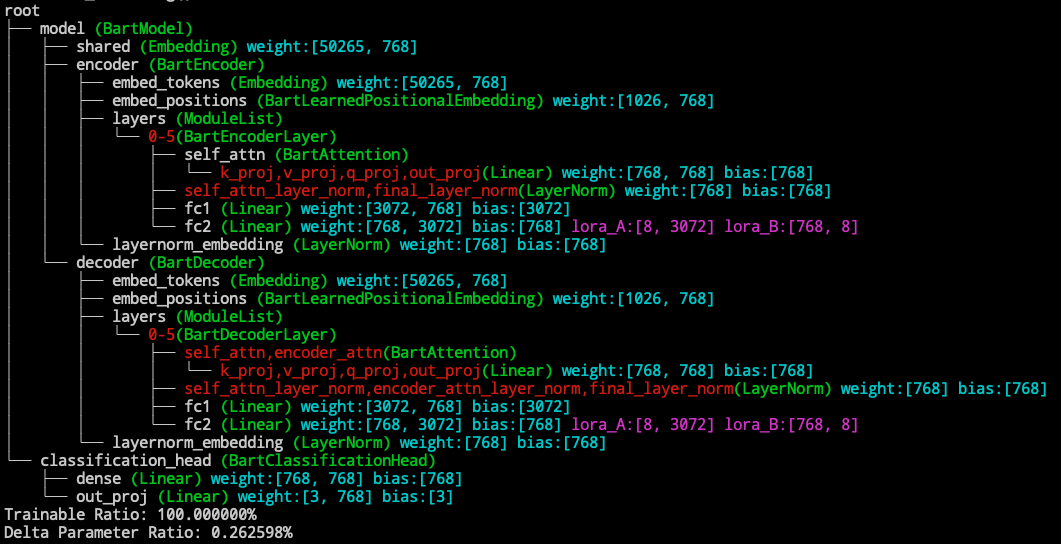

delta_model.log()

Now we detach the deltas from the backbone:

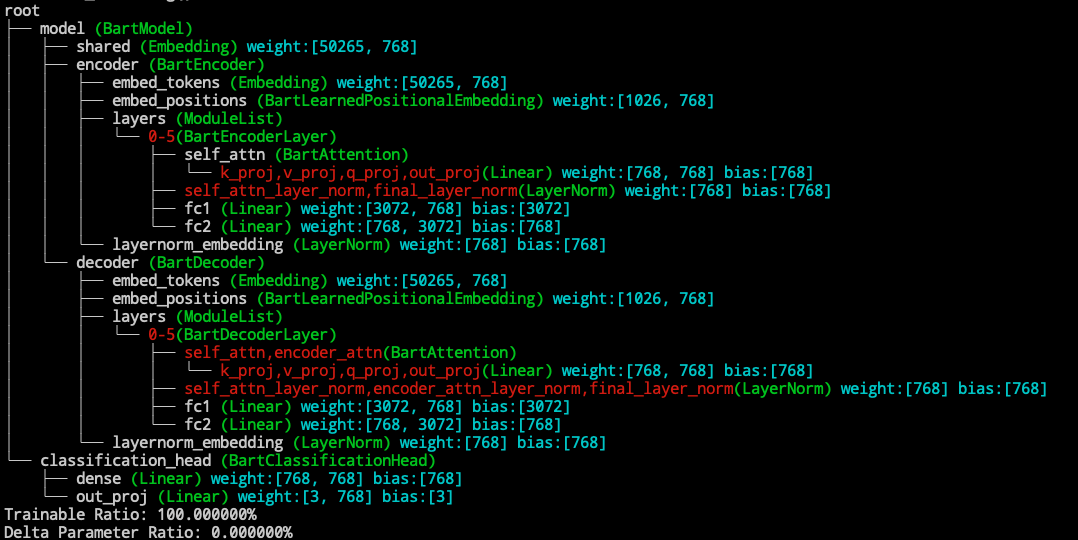

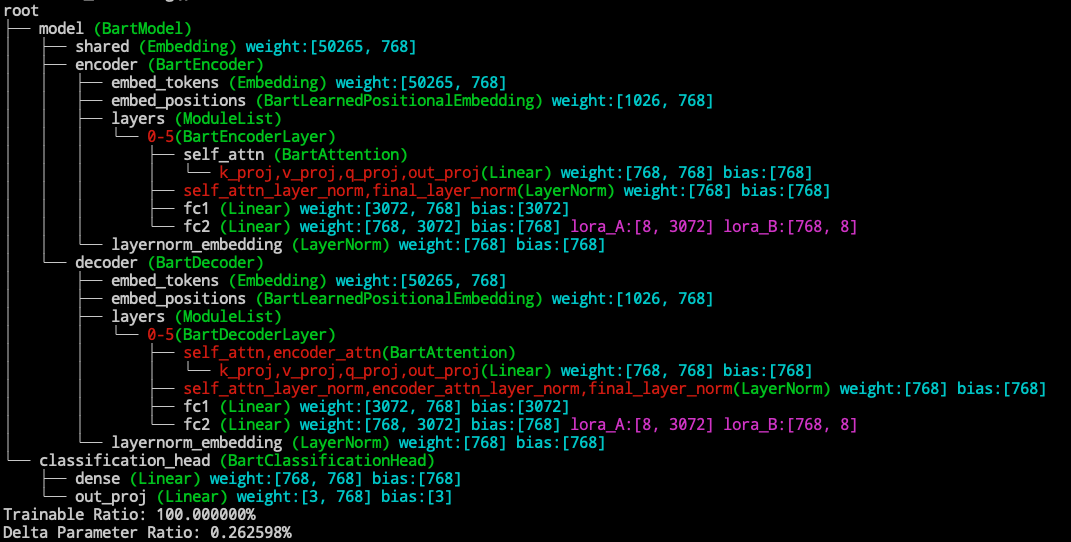

delta_model.detach()

delta_model.log()

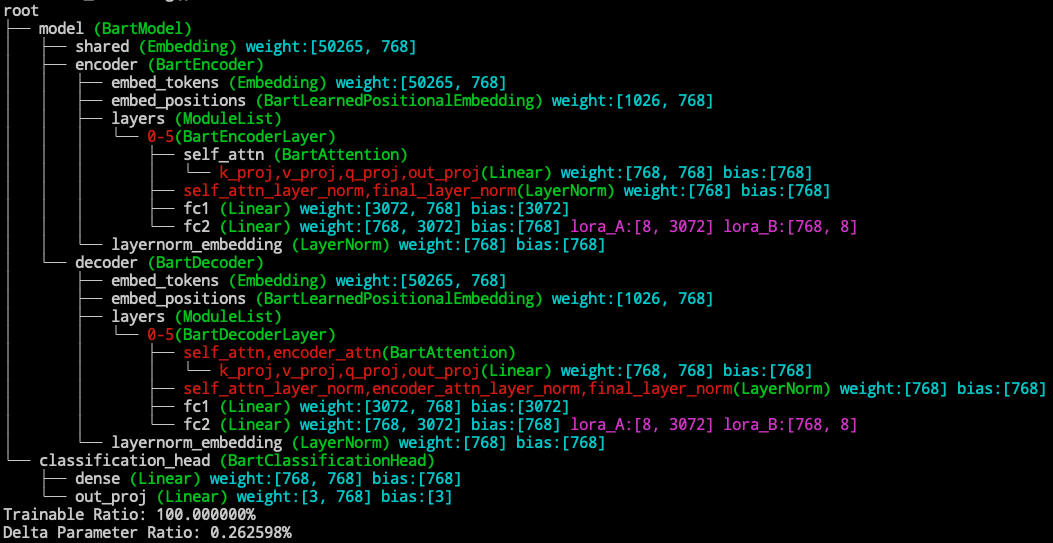

We can reattach the deltas to the backbone:

delta_model.attach()

delta_model.log()
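The language-switching scenario described at the start of this section can be built on top of attach() and detach(). The following is a minimal sketch, not part of OpenDelta: `DeltaRouter` and `StubDelta` are hypothetical names introduced here, and the stub only records its state so that the example runs without downloading a model. It assumes every delta starts attached after construction, as in the example above, and that a real delta model such as `LoraModel` could be passed in place of the stub because it exposes the same two methods.

```python
class DeltaRouter:
    """Keeps at most one delta attached to a shared backbone at a time."""

    def __init__(self, deltas):
        # deltas: mapping from task name to an object with attach()/detach().
        self.deltas = dict(deltas)
        self.active = None
        # Assumption: each delta is attached on construction, so detach
        # all of them once to start from the plain backbone.
        for delta in self.deltas.values():
            delta.detach()

    def switch(self, task):
        """Make `task` the only attached delta."""
        if task not in self.deltas:
            raise KeyError(f"no delta registered for task {task!r}")
        if task == self.active:
            return  # already attached, nothing to do
        if self.active is not None:
            self.deltas[self.active].detach()
        self.deltas[task].attach()
        self.active = task


class StubDelta:
    """Hypothetical stand-in for a delta model; it only records its state."""

    def __init__(self):
        self.attached = True  # mirrors the assumed attach-on-construction

    def attach(self):
        self.attached = True

    def detach(self):
        self.attached = False


deltas = {"chinese": StubDelta(), "french": StubDelta(), "english": StubDelta()}
router = DeltaRouter(deltas)
router.switch("chinese")  # a Chinese sentence arrives
router.switch("french")   # next, a French sentence arrives
```

With real deltas, the dictionary values would be the delta models built on the shared backbone, one per language.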

Independence of Different Delta Models

Different delta models can be attached and detached independently of one another. (Note, however, that the visualization will not show all deltas in the backbone model.)

# continue from the above example

from opendelta import AdapterModel

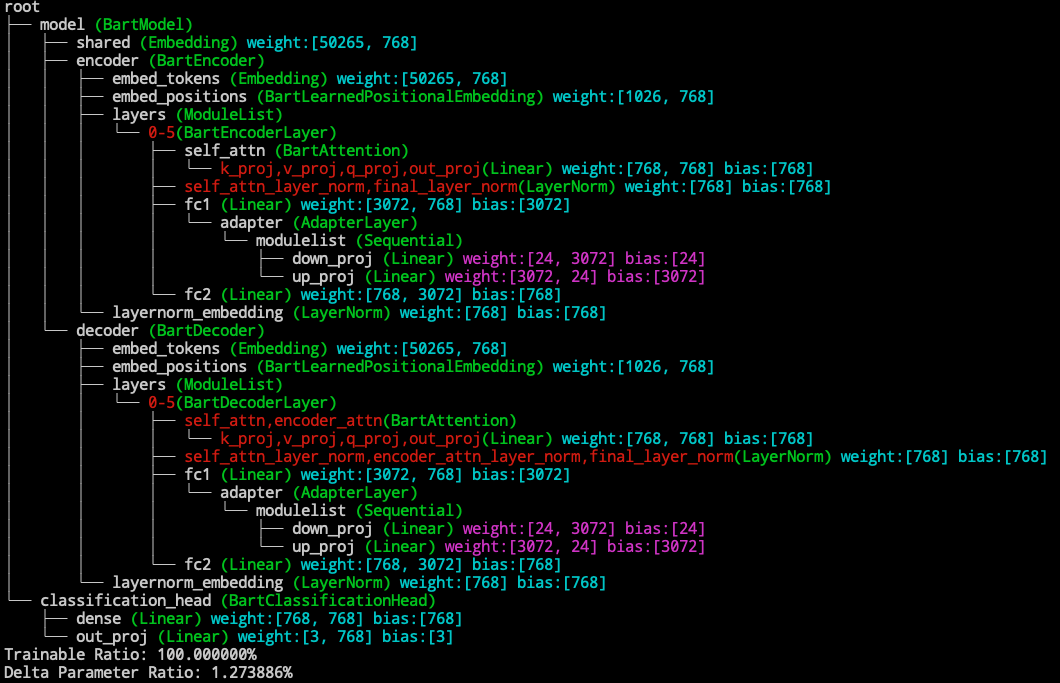

delta_model2 = AdapterModel(backbone_model=model, modified_modules=['fc1'])

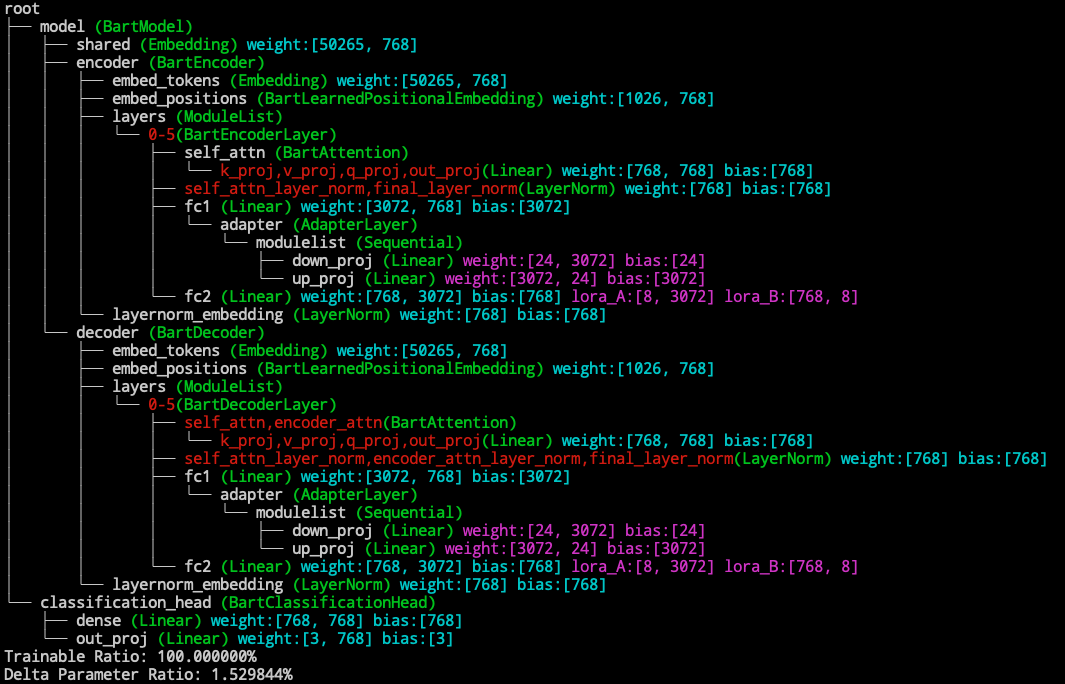

delta_model2.log()

Detach the LoRA delta:

delta_model.detach() # detach the lora delta

delta_model.log()

Detach the adapter delta and reattach the LoRA delta:

delta_model2.detach() # detach the adapter delta

delta_model.attach() # reattach the lora delta

delta_model.log()
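Because each delta is toggled independently, one delta can be switched off for a single step while the others stay in place. The sketch below wraps that pattern in a context manager. `detached` and `ToggleStub` are hypothetical helpers introduced for illustration, not OpenDelta functions, and the stub stands in for any delta model exposing detach() and attach().

```python
from contextlib import contextmanager


@contextmanager
def detached(delta):
    """Detach `delta` for the duration of the block, then reattach it."""
    delta.detach()
    try:
        yield delta
    finally:
        # Reattach even if the body of the `with` block raised.
        delta.attach()


class ToggleStub:
    """Hypothetical stand-in for a delta model; it only records its state."""

    def __init__(self):
        self.attached = True

    def attach(self):
        self.attached = True

    def detach(self):
        self.attached = False


lora = ToggleStub()
adapter = ToggleStub()

with detached(lora):
    # Only the LoRA stand-in is detached here; the adapter is untouched.
    inside = (lora.attached, adapter.attached)

after = (lora.attached, adapter.attached)
```

The `try`/`finally` ensures the delta is reattached even when the code inside the block fails, so the backbone is not left in a half-configured state.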